Performance testing with NVMe storage and Spectrum Scale 5

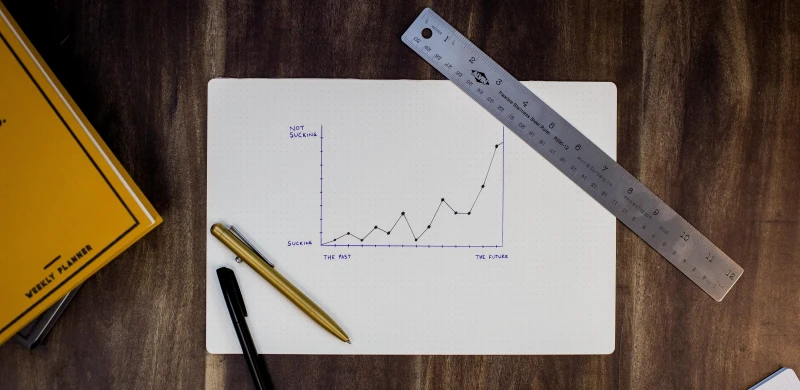

We have recently procured 120TB of NVMe-based SSD storage from E8 Storage for the Apocrita HPC Cluster. The plan is to deploy it as a replacement for our oldest and slowest scratch storage. We have been testing the new storage extensively, as we expect it to open up new possibilities and advantages within the cluster.